Many say marketing mix models (MMMs) are correlational in nature. Statistically, that is not entirely true. When people say correlation, they usually have bivariate Pearson correlation in mind.

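What happens in an MMM is much more than bivariate correlation. Multiple linear regressions such as MMMs technically estimate conditional correlations: each coefficient reflects the association between one variable and the outcome with the other variables held fixed.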
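To make the distinction concrete, here is a minimal sketch on simulated data. The variable names `tv`, `search`, and `sales`, and all coefficients, are illustrative assumptions rather than anything from a real model. Sales are driven only by `tv`, but `search` moves together with `tv`, so the bivariate correlation between `search` and `sales` looks strong while the correlation conditional on `tv` is close to zero.

```python
import math
import random


def pearson(x, y):
    """Plain bivariate Pearson correlation."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    vx = sum((a - mx) ** 2 for a in x)
    vy = sum((b - my) ** 2 for b in y)
    return cov / math.sqrt(vx * vy)


def partial_corr(x, y, z):
    """Correlation of x and y conditional on z (first-order partial correlation)."""
    r_xy, r_xz, r_yz = pearson(x, y), pearson(x, z), pearson(y, z)
    return (r_xy - r_xz * r_yz) / math.sqrt((1 - r_xz ** 2) * (1 - r_yz ** 2))


rng = random.Random(0)  # fixed seed so the sketch is reproducible
n = 2000
tv = [rng.gauss(0, 1) for _ in range(n)]
search = [t + 0.5 * rng.gauss(0, 1) for t in tv]  # tracks tv, has no effect of its own
sales = [2.0 * t + rng.gauss(0, 1) for t in tv]   # driven by tv only

bivariate = pearson(search, sales)
conditional = partial_corr(search, sales, tv)
print(f"bivariate corr(search, sales):       {bivariate:.2f}")
print(f"corr(search, sales | tv held fixed): {conditional:.2f}")
```

A regression with both `tv` and `search` as inputs behaves like the second number, not the first, which is why calling an MMM "just correlation" undersells it.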

Correlations can be useful within a limited scope, but stretching their interpretation is what leads to trouble.

𝐈𝐧𝐟𝐨𝐫𝐦𝐚𝐭𝐢𝐨𝐧 𝐭𝐡𝐞𝐨𝐫𝐞𝐭𝐢𝐜 𝐛𝐚𝐬𝐞𝐝 𝐌𝐌𝐌𝐬

At Aryma Labs, we started researching information-theoretic approaches nearly four years ago.

Our motivation to pursue information-theoretic methods arose from two flaws of correlation:

1. Correlation assumes a linear relationship.

In real-world settings, the relationships between variables are rarely linear. Assuming a linear relationship means you leave out a lot of information about how one variable affects the other.

2. Correlation is sensitive to outliers.

If you look at any marketing spend data, you are bound to see some outliers (see my post on what an outlier is, in the resources).

Therefore, relying only on correlation for MMM is inefficient.
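Both flaws are easy to reproduce on simulated data. The sketch below is illustrative only: the data, the bin count, and the simple histogram-based mutual information estimate are assumptions chosen for brevity, not the estimators used in our research. The first part shows Pearson correlation missing a strong but nonlinear dependence that mutual information picks up; the second shows a single extreme point manufacturing a correlation between otherwise unrelated series.

```python
import math
import random
from collections import Counter


def pearson(x, y):
    """Plain bivariate Pearson correlation."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    vx = sum((a - mx) ** 2 for a in x)
    vy = sum((b - my) ** 2 for b in y)
    return cov / math.sqrt(vx * vy)


def binned_mutual_info(x, y, bins=8):
    """Plug-in mutual information (in nats) from equal-width histogram bins."""
    def to_bins(values):
        lo, hi = min(values), max(values)
        width = (hi - lo) / bins
        return [min(int((v - lo) / width), bins - 1) for v in values]

    bx, by = to_bins(x), to_bins(y)
    n = len(x)
    joint, px, py = Counter(zip(bx, by)), Counter(bx), Counter(by)
    return sum(
        (c / n) * math.log(c * n / (px[i] * py[j]))
        for (i, j), c in joint.items()
    )


rng = random.Random(0)  # fixed seed so the sketch is reproducible

# Flaw 1: a strong nonlinear (U-shaped) dependence that Pearson cannot see.
x = [rng.uniform(-1, 1) for _ in range(5000)]
response = [v ** 2 + rng.gauss(0, 0.05) for v in x]
unrelated = [rng.uniform(-1, 1) for _ in range(5000)]  # independent baseline

r_nonlinear = pearson(x, response)
mi_nonlinear = binned_mutual_info(x, response)
mi_baseline = binned_mutual_info(x, unrelated)
print(f"U-shape:  Pearson = {r_nonlinear:.2f}, MI = {mi_nonlinear:.2f} nats")
print(f"baseline: MI between independent series = {mi_baseline:.3f} nats")

# Flaw 2: two unrelated series plus one extreme observation.
spend = [rng.gauss(100, 10) for _ in range(300)]
sales = [rng.gauss(500, 50) for _ in range(300)]
r_clean = pearson(spend, sales)
r_outlier = pearson(spend + [500.0], sales + [2500.0])
print(f"Pearson without outlier = {r_clean:.2f}, with one outlier = {r_outlier:.2f}")
```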

Enter information-theoretic approaches.

Here is how we have leveraged them.

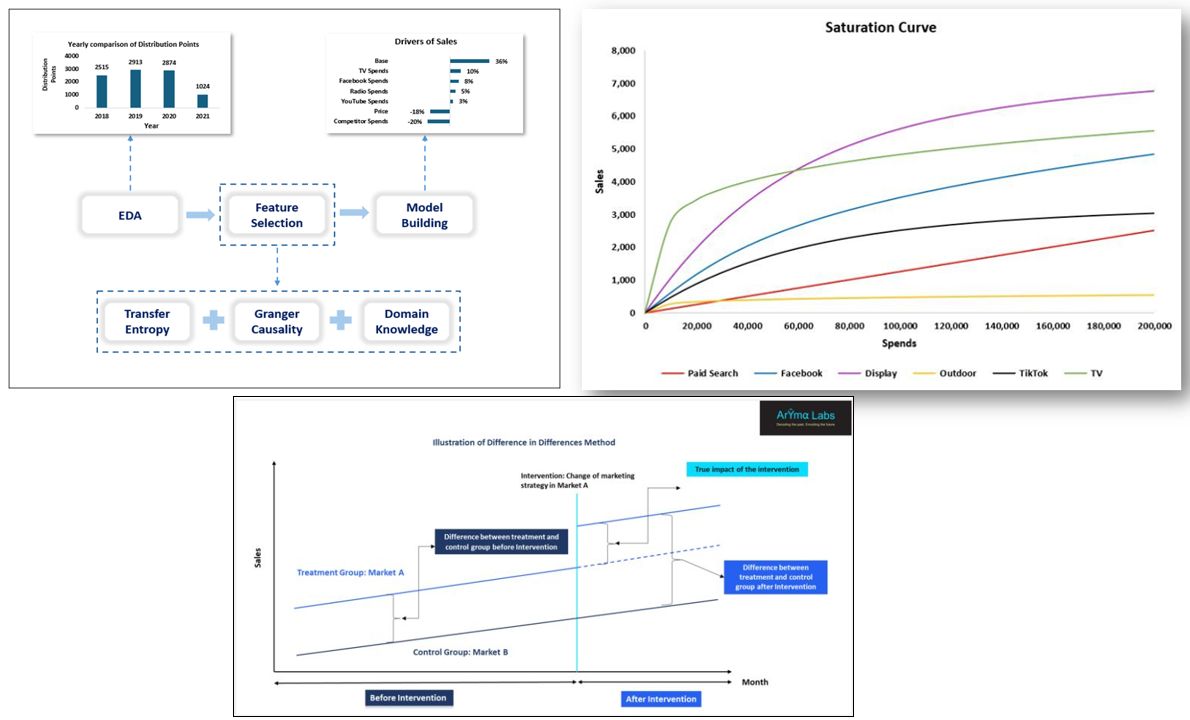

▪ Feature Selection

We use transfer entropy and Granger causality for feature selection (link in resources).
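For readers new to transfer entropy, the sketch below shows the general idea on simulated data. It is not our production pipeline: the lag of one period, the three equal-frequency bins, the plug-in estimator, and the series names `spend`, `unrelated`, and `sales` are all assumptions made to keep the example short. Transfer entropy from a candidate driver to sales measures how much the driver's past reduces uncertainty about the next sales value beyond what past sales already explain.

```python
import math
import random
from collections import Counter


def discretize(series, bins=3):
    """Equal-frequency binning: map each value to a label in 0..bins-1 by rank."""
    order = sorted(range(len(series)), key=lambda i: series[i])
    labels = [0] * len(series)
    for rank, i in enumerate(order):
        labels[i] = rank * bins // len(series)
    return labels


def transfer_entropy(source, target, bins=3):
    """Plug-in transfer entropy source -> target (lag 1), in nats."""
    s, t = discretize(source, bins), discretize(target, bins)
    # Each triple is (target next, target now, source now).
    triples = list(zip(t[1:], t[:-1], s[:-1]))
    n = len(triples)
    c_abc = Counter(triples)
    c_ab = Counter((a, b) for a, b, _ in triples)
    c_bc = Counter((b, c) for _, b, c in triples)
    c_b = Counter(b for _, b, _ in triples)
    # Sum of p(a,b,c) * log[ p(a | b, c) / p(a | b) ], written with raw counts.
    return sum(
        (k / n) * math.log(k * c_b[b] / (c_bc[(b, c)] * c_ab[(a, b)]))
        for (a, b, c), k in c_abc.items()
    )


rng = random.Random(0)  # fixed seed so the sketch is reproducible
n = 2000
spend = [rng.gauss(0, 1) for _ in range(n)]
unrelated = [rng.gauss(0, 1) for _ in range(n)]
# Sales respond to the previous period's spend; `unrelated` plays no role.
sales = [rng.gauss(0, 0.3)] + [
    0.8 * spend[t - 1] + rng.gauss(0, 0.3) for t in range(1, n)
]

te_spend = transfer_entropy(spend, sales)
te_unrelated = transfer_entropy(unrelated, sales)
print(f"TE(spend -> sales)     = {te_spend:.3f} nats")
print(f"TE(unrelated -> sales) = {te_unrelated:.3f} nats")
```

In a feature selection setting, candidates would be ranked by such scores. A real workflow would also need a significance check (for example against shuffled series), which this sketch leaves out.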

▪ Model fitting

We use transfer entropy and Kullback-Leibler (KL) divergence to determine the right adstock value. Our research also found that the saturation points of media can be identified through transfer entropy (link in resources).

▪ Model validation

Aryma Labs has pioneered the use of the difference-in-differences (DID) technique to validate MMMs (link in resources). We are currently researching how to reduce bias in DID through entropy balancing and matching.
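As a rough illustration of the DID idea, the sketch below compares a test region that received a campaign with a control region that did not. All numbers are made up, the `mmm_lift` value is a hypothetical stand-in for what a model might attribute to the campaign, and the basic two-group, two-period estimator shown here assumes both regions would have followed parallel trends without the campaign. The linked article describes the actual validation procedure.

```python
from statistics import mean

# Hypothetical weekly sales; only the test region gets the campaign in the post period.
test_pre = [100, 102, 98, 100]
test_post = [115, 117, 113, 115]
control_pre = [90, 92, 88, 90]
control_post = [95, 97, 93, 95]


def did_estimate(test_pre, test_post, control_pre, control_post):
    """Change in the test group minus change in the control group."""
    test_change = mean(test_post) - mean(test_pre)
    control_change = mean(control_post) - mean(control_pre)
    return test_change - control_change


did_lift = did_estimate(test_pre, test_post, control_pre, control_post)

# Hypothetical weekly incremental sales that an MMM attributes to the same campaign.
mmm_lift = 9.0
relative_gap = abs(mmm_lift - did_lift) / did_lift

print(f"DID lift per week: {did_lift}")
print(f"MMM vs DID relative gap: {relative_gap:.0%}")
```

A small gap between the two estimates is evidence that the MMM's attribution is in line with what the quasi-experiment shows; a large gap is a prompt to revisit the model.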

𝐌𝐚𝐤𝐢𝐧𝐠 𝐭𝐡𝐞 𝐜𝐚𝐮𝐬𝐚𝐥 𝐣𝐮𝐦𝐩

Yes, correlation is not causation. But one should also not forget that correlation is a condition for causation, though not a sufficient one.

Making causal claims based on correlation alone is a quantum leap. Making causal claims from an information-theoretic approach, however, is perhaps just a skip.

Our research has found that information-theoretic approaches lend themselves more to causality than correlation alone does (links to relevant papers in resources).

Overall, leveraging information-theoretic methods has enabled us to deliver models that are not only statistically accurate but also practically relevant.

Not to mention, it has greatly helped us add causal inference to our MMMs.

Resources:

Transfer entropy:

https://www.arymalabs.com/identification-of-saturation-points-in-mmm-through-transfer-entropy/

Feature selection:

https://www.linkedin.com/posts/ridhima-kumar7_novel-trifecta-approach-to-feature-selection-activity-7156260192383377408-hXv_?utm_source=share&utm_medium=member_desktop

DID:

https://www.arymalabs.com/proving-efficacy-of-mmm-through-difference-in-difference-did/

What is an outlier:

https://bit.ly/3Ibbiyb

Is information theory inherently a theory on causation:

https://arxiv.org/abs/2010.01932#:~:text=Information%20theory%20gives%20rise%20to,associations%20between%20variables%20as%20tensors.

Granger causality and transfer entropy are equivalent for Gaussian variables:

https://arxiv.org/abs/0910.4514